Robotics AI on the Edge: Hugging Face's Push for Embedded Platforms

By Libertarian • 2026-03-06 07:13:08

For years, advanced robotics AI has been tethered to the cloud or powerful workstations, limiting its real-world agility and autonomy. Now, a quiet revolution is underway, promising to unshackle intelligent machines and embed sophisticated cognitive capabilities directly into their operational hardware.

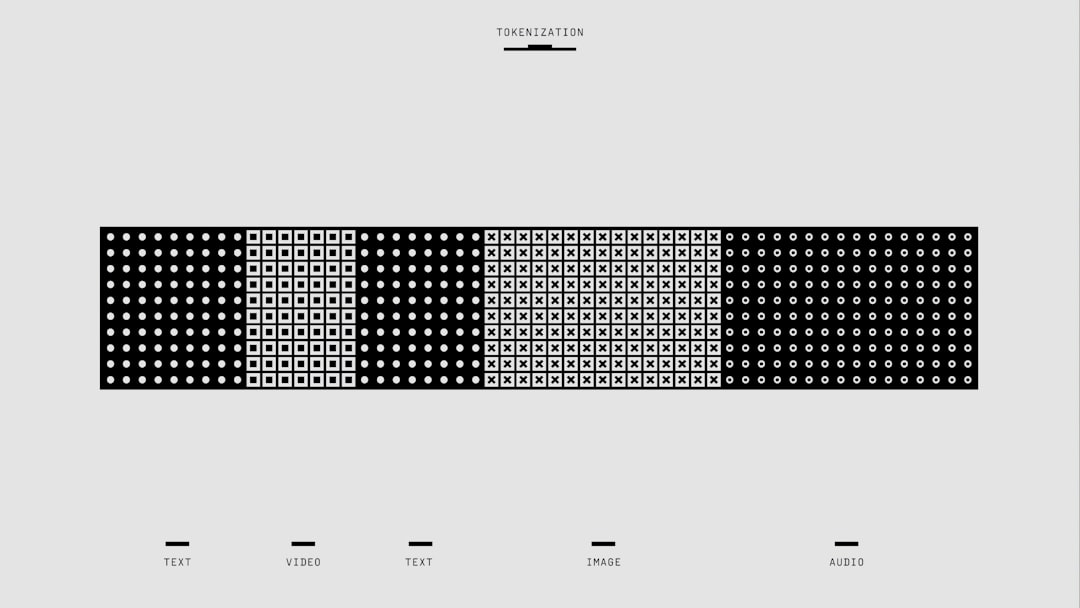

Hugging Face, a prominent force in democratizing large language models, recently unveiled a significant initiative aimed at extending sophisticated robotics AI to embedded platforms. This effort, detailed on their blog, focuses on three critical pillars: streamlined dataset recording for real-world interactions, efficient fine-tuning of Vision-Language-Action (VLA) models, and rigorous on-device optimizations. The overarching objective is to enable complex robotic behaviors, traditionally confined to high-performance computing, to execute directly on resource-constrained hardware, thereby accelerating the adoption and practical deployment of intelligent robots across diverse industries.

The evolution of robotics from early industrial manipulators to sophisticated autonomous agents has been consistently constrained by computational demands. While initial systems relied on precise pre-programming, the integration of advanced sensors and machine learning, supported by middleware such as ROS, introduced greater adaptability. However, deploying complex AI, particularly large neural networks for perception and decision-making, has typically required powerful GPU clusters or reliance on cloud infrastructure. This cloud-centric approach, while offering scalability, introduced critical issues: network latency, dependence on unreliable connectivity, and privacy vulnerabilities, all of which impede real-time responsiveness and operational autonomy in critical applications like autonomous vehicles or surgical robotics.

The contemporary robotics landscape demands increasingly intelligent, adaptable, and energy-efficient systems. The advent of Vision-Language-Action (VLA) models, which fuse visual, linguistic, and action data, promises unprecedented generalization and intuitive human-robot interaction. Yet deploying these multi-billion-parameter models onto embedded systems with typical power budgets under 100 watts and limited memory remains a formidable engineering hurdle. While vendors like Nvidia (Jetson), Intel (OpenVINO), and Qualcomm (Snapdragon Robotics) offer specialized edge AI accelerators and inference toolkits, Hugging Face's intervention addresses the surrounding software stack: providing open-source tools for data collection, model training, and optimization, which are often the primary bottlenecks for developers in practical, real-world deployments.
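A rough back-of-the-envelope estimate shows why memory is such a hurdle. The sketch below is illustrative only: the 3-billion-parameter figure is a hypothetical model size, not one taken from Hugging Face's announcement, and the function `weight_memory_gib` is a name made up for this example. It counts weights alone and ignores activations, caches, and runtime overhead, so real footprints will be higher.

```python
def weight_memory_gib(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory needed to hold the model weights, in GiB.

    Counts weights only; activations and runtime overhead are ignored.
    """
    bytes_total = num_params * bits_per_weight / 8
    return bytes_total / 2**30


if __name__ == "__main__":
    params = 3e9  # hypothetical 3-billion-parameter model (assumption)
    for bits in (32, 16, 8, 4):
        print(f"{bits:>2}-bit weights: {weight_memory_gib(params, bits):.2f} GiB")
```

Halving the bits per weight halves the weight footprint, which is why lower-precision formats matter so much on boards with only a few gigabytes of shared memory.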

Hugging Face's initiative has immediate implications for the robotics community. By standardizing open-source tools for dataset recording and VLA fine-tuning, the company is significantly lowering the barrier to entry for researchers and startups. This democratization fosters rapid innovation, enabling a wider range of developers to experiment with and deploy advanced robotic intelligence without needing to build proprietary infrastructure. A small agricultural tech firm, for instance, can now more readily fine-tune a VLA model for crop disease detection using accessible tools. The emphasis on on-device optimization supports greater autonomy, reduced latency, and enhanced privacy, all vital for applications from urban delivery robots to in-home assistive devices.

In the long term, this drive towards embedded robotics AI portends an era of ubiquitous, intelligent machines deeply integrated into our physical environment. Manufacturing facilities could see robots dynamically adapting to production changes without constant cloud reliance. Elderly care robots might interpret complex instructions reliably even during network outages. This shift from cloud-dependent to edge-native AI fundamentally enhances operational reliability and security, as sensitive data processing remains local. It also unlocks novel business models for robotics-as-a-service, simplifying deployment. Furthermore, it intensifies competitive pressure on traditional industrial automation companies to integrate more flexible, AI-driven capabilities, moving beyond rigid programming paradigms.

The immediate beneficiaries are the vast community of robotics startups and academic researchers, gaining access to state-of-the-art tools previously confined to well-funded corporate labs. Companies like Boston Dynamics could leverage these open-source advancements to accelerate specific model deployments. Hardware manufacturers such as Nvidia, Intel, and Qualcomm, whose embedded AI accelerators are critical for on-device optimization, will likely experience increased demand for their specialized chips. Hugging Face itself strengthens its position as a central AI hub, expanding into embodied AI. Ultimately, end-users, from consumers to enterprises, stand to gain from more capable, cost-effective, and robust robotic solutions.

Conversely, companies heavily invested in proprietary, closed-source robotics AI platforms may face increased competitive pressure as open-source alternatives mature. While cloud robotics will retain its role for massive-scale data processing and model training, a significant portion of the market requiring real-time, low-latency decision-making is likely to shift towards embedded solutions, potentially impacting pure-play cloud robotics service providers. Traditional industrial automation giants, whose business models historically relied on bespoke engineering, must pivot quickly to integrate these flexible, learning-based AI paradigms or risk being outmaneuvered by agile, AI-native competitors.

Over the next 12 to 24 months, expect a surge in open-source contributions and benchmarks demonstrating the growing performance and complexity of robotics AI on embedded systems. The immediate focus will be on further standardization of VLA model architectures and refined on-device optimization techniques, including specialized neural network pruning and quantization. Within three to five years, robots running sophisticated VLA models on edge devices could reach significant market penetration across logistics, healthcare, and consumer sectors, driving down operational costs and expanding utility. This would also spur deeper hardware-software co-design, with chip manufacturers integrating AI accelerators even more tightly with VLA-specific processing capabilities.
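For readers unfamiliar with the two optimization techniques named above, here is a minimal sketch of the underlying ideas in plain Python. It is not Hugging Face's implementation; the function names and the sample weights are made up for illustration, and production toolchains apply these steps per layer or per channel with calibration data.

```python
def prune_by_magnitude(weights, sparsity):
    """Magnitude pruning: zero out the smallest-magnitude fraction of weights."""
    k = int(len(weights) * sparsity)  # number of weights to remove
    smallest = sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k]
    pruned = list(weights)
    for i in smallest:
        pruned[i] = 0.0
    return pruned


def quantize_int8(weights):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the stored integers."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.02, -0.5, 0.31, -0.07, 0.9, -0.003]  # illustrative values
    pruned = prune_by_magnitude(weights, sparsity=0.5)
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    print("pruned:   ", pruned)
    print("int8:     ", q)
    print("max error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Pruning trades a little accuracy for fewer non-zero weights, while quantization stores each weight in one byte instead of four; the rounding error per weight is bounded by half the scale factor.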

Hugging Face's strategic move democratizes access to advanced robotics AI, catalyzing a fundamental shift from cloud-centric to edge-native intelligence. This transition promises to unlock unprecedented autonomy and adaptability for intelligent machines, fundamentally redefining their role in industry and everyday life.
